Advanced AI Website Audit Strategies for High Growth SEO

TL;DR: AI website audits use natural language processing and predictive AI to scan a site for technical, content, and speed flaws in minutes, far outpacing manual methods. Top tools like Nightwatch and SEMrush deliver 20 to 40 percent traffic lifts by fixing slow loads and thin content. Use our six-step process, 60-point checklist, and tables to get results. One dental site achieved a 1,247x return on investment after an AI-driven rebuild.

While digital practitioners everywhere are using AI to generate content rapidly, a select group is using it to better understand their audiences, perform analysis, identify gaps, and improve the quality of everything they publish. These represent two distinct approaches:

- Some practitioners use AI to find efficiencies and accelerate workflows.

- Other practitioners use AI to find deficiencies and enhance quality.

Which group is more likely to drive measurable outcomes? Which is more likely to produce something impactful, comprehensive, and memorable? The answer is clear: the second group. Before publishing more articles, refine your conversion-focused web pages, because those URLs have greater influence on marketing results. Always optimize from the bottom of the funnel up: fix website fundamentals before creating more content.

Three Methodologies for AI Website Audits

There are three distinct methodologies for auditing web pages with artificial intelligence. Each has its own advantages and limitations, yet all emphasize business impact and B2B lead generation:

- Intelligent Technical Evaluation

- Content and EEAT Assessment

- AI Readiness and Schema Review

Three Approaches to Provide AI with Webpage Access

| Method | AI Use Case | Action and Impact |
| --- | --- | --- |
| Deep Site Crawl | Technical Audit | Identifies robots.txt blocks and schema gaps |
| NLP Content Scan | EEAT Evaluation | Flags trust gaps and token bloat |
| LLM Query Simulation | AI Search Readiness | Prepares pages for AI Overviews |

Intelligent Technical Evaluation

The most efficient method to provide a webpage to AI is to enable deep crawling. Alternatively, if you use premium tools, you may integrate Google Search Console for enhanced access.

Pros: Rapid, comprehensive, and predictive.

Cons: AI does not perceive user context, so it may flag false positives without persona training.

With this methodology, AI can evaluate technical infrastructure, identify rendering traps, and propose structural modifications. However, it cannot assess certain experiential elements such as brand voice or emotional resonance.

Apply the Persona Prompt First

Before conducting this AI website audit, you must first orient the AI to your target persona using a persona prompt or by uploading your ideal client profiles. This is the essential first step.

- Until AI understands your intended audience, its responses remain generic.

- After training it on your audience, responses become relevant and specific to your buyer.

- Quality improves substantially once the persona is applied.
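A minimal sketch of what applying the persona can look like in practice, assuming you send prompts to a chat model as plain text: the persona details are prepended to every audit task. The `Persona` fields and the `build_audit_prompt` helper are hypothetical illustrations, not part of any specific tool.

```python
from dataclasses import dataclass


@dataclass
class Persona:
    """Hypothetical persona fields; replace them with your own ideal client profile."""
    role: str
    industry: str
    goals: list[str]
    objections: list[str]


def build_audit_prompt(persona: Persona, task: str, page_text: str) -> str:
    """Combine persona context, the audit task, and page content into one prompt."""
    goals = "; ".join(persona.goals)
    objections = "; ".join(persona.objections)
    return (
        f"Target reader: a {persona.role} in {persona.industry}.\n"
        f"Their goals: {goals}.\n"
        f"Their common objections: {objections}.\n\n"
        f"Task: {task}\n\n"
        f"Page content:\n{page_text}"
    )


if __name__ == "__main__":
    buyer = Persona(
        role="marketing director",
        industry="B2B software",
        goals=["generate qualified leads", "prove ROI to leadership"],
        objections=["limited budget", "long approval cycles"],
    )
    print(build_audit_prompt(buyer, "Which claims on this page lack evidence?", "<paste page text here>"))
```

Keeping the persona in one reusable structure means every framework in this article receives the same audience context, which is why this step comes first.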

Technical Evaluation Framework

You are a technical SEO specialist skilled in evaluating site infrastructure for AI visibility. The following represent best practices for 2026 technical readiness:

- The robots.txt file permits AI bot access without accidental blocks

- Schema markup appears on 100% of key pages

- JavaScript rendering does not trap content from RAG agents

- Core Web Vitals pass thresholds for mobile and desktop

- Code bloat remains under 150KB per 500 words of content

- Hreflang tags are correctly implemented for bilingual targeting

- The site provides clear signals for answer engine optimization
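For the robots.txt item, a quick local check is possible with Python's standard `urllib.robotparser` module. This sketch assumes you already have the robots.txt text in hand; the list of AI crawler user-agent tokens is an example only, so confirm current names in each vendor's documentation before relying on it.

```python
from urllib.robotparser import RobotFileParser

# Example AI crawler user-agent tokens (assumption: verify against vendor docs).
AI_AGENTS = ["GPTBot", "ClaudeBot", "PerplexityBot", "Google-Extended", "CCBot"]


def blocked_ai_agents(robots_txt: str, url: str, agents=AI_AGENTS) -> list[str]:
    """Return the user agents that the given robots.txt rules block from `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse() also marks the rules as loaded
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


if __name__ == "__main__":
    sample = """\
User-agent: *
Disallow: /admin/

User-agent: GPTBot
Disallow: /
"""
    print(blocked_ai_agents(sample, "https://example.com/services/"))  # ['GPTBot']
```

An empty list means none of the listed agents is blocked for that URL. Specific user-agent groups take precedence over the `*` group, which is how an accidental site-wide block for a single AI crawler can slip through.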

One 1,500-site study found that 70% of websites lack schema markup, which leaves them structurally invisible to AI agents despite their visual appeal. Closing this gap can substantially improve answer engine optimization visibility.
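To estimate your own schema coverage, a rough sketch like the one below counts how many pages contain at least one parseable JSON-LD block. It assumes you already have each page's HTML (for example, from a crawl export) and only detects JSON-LD; Microdata and RDFa are not checked.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect parsed contents of <script type="application/ld+json"> blocks."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        script_type = (dict(attrs).get("type") or "").lower()
        if tag == "script" and script_type == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            try:
                self.blocks.append(json.loads("".join(self._buffer)))
            except json.JSONDecodeError:
                pass  # malformed JSON-LD is treated the same as missing schema


def has_schema(html: str) -> bool:
    extractor = JsonLdExtractor()
    extractor.feed(html)
    extractor.close()
    return bool(extractor.blocks)


def schema_coverage(pages: dict[str, str]) -> float:
    """Percentage of pages (URL -> HTML) with at least one valid JSON-LD block."""
    if not pages:
        return 0.0
    covered = sum(has_schema(html) for html in pages.values())
    return 100.0 * covered / len(pages)


if __name__ == "__main__":
    pages = {
        "/": '<script type="application/ld+json">{"@type": "Organization"}</script>',
        "/services/": "<p>No structured data here.</p>",
    }
    print(f"{schema_coverage(pages):.0f}% of pages have JSON-LD")  # 50% of pages have JSON-LD
```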

Intelligent Content and EEAT Assessment

A more comprehensive method to audit the content quality of a webpage is to provide the full textual content, not just metadata. Because AI can process natural language, it can provide feedback on EEAT signals, token efficiency, and semantic richness.

To accomplish this, you will need a tool for content extraction. Many platforms offer this natively:

- Nightwatch: Prompt-based content evaluation

- SEMrush: NLP and toxicity scoring

- Custom scripts: Batch text extraction
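If you take the custom-script route, batch text extraction can be sketched with Python's built-in `html.parser`. This illustrative sketch assumes the HTML is already downloaded and that the content is present in the server-delivered markup; `extract_text` and `batch_extract` are hypothetical helper names, and text injected later by JavaScript will not be captured.

```python
from html.parser import HTMLParser

# Elements whose contents are not visible page copy.
SKIP_TAGS = {"script", "style", "noscript", "template", "svg"}


class VisibleTextExtractor(HTMLParser):
    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in SKIP_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in SKIP_TAGS and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep only non-empty text that is outside any skipped element.
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def extract_text(html: str) -> str:
    parser = VisibleTextExtractor()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


def batch_extract(pages: dict[str, str]) -> dict[str, str]:
    """Map each URL to the visible text of its HTML."""
    return {url: extract_text(html) for url, html in pages.items()}


if __name__ == "__main__":
    html = "<h1>Dental Implants</h1><script>var x = 1;</script><p>Book a consult.</p>"
    print(extract_text(html))  # Dental Implants Book a consult.
```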

Always begin by providing AI with your persona. Otherwise, it will not understand the content's target audience, and the response will remain generic.

Content Evaluation Framework

You are a content quality specialist skilled at using EEAT signals to support marketing messages. The following represent types of evidence that can be added to webpages:

- Author credentials

- Case studies

- Data citations

- Client testimonials

- Years of experience

- Association memberships

The provided text represents content from a webpage. Evaluate the extent to which the content does and does not use supportive evidence. Which claims lack support? Show your reasoning.

Do not place excessive trust in AI; its responses can be misleading. Use engagement metrics, such as scroll depth, to check what percentage of visitors actually reached the part of the page containing each piece of evidence.
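The engagement check is simple arithmetic once you have the data. This sketch assumes your analytics tool can export the maximum scroll depth per session as a percentage of page height, and that you know roughly where the evidence element sits on the page; the session values below are made-up sample data.

```python
def share_reaching(scroll_depths: list[float], element_position: float) -> float:
    """Percentage of sessions whose maximum scroll depth reached an element.

    Both arguments are expressed as a percentage of total page height (0-100).
    """
    if not scroll_depths:
        return 0.0
    reached = sum(1 for depth in scroll_depths if depth >= element_position)
    return 100.0 * reached / len(scroll_depths)


if __name__ == "__main__":
    # Hypothetical export: maximum scroll depth per session, in % of page height.
    sessions = [25, 50, 50, 75, 90, 100, 30, 60]
    testimonials_at = 70  # testimonials block starts 70% of the way down the page
    print(share_reaching(sessions, testimonials_at))  # 37.5
```

In this sample, only 3 of 8 sessions scroll far enough to see the testimonials, which would argue for moving that evidence higher on the page.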

Chart Prompt for Evidence Audit

Create a chart with three columns. In the left column, list the marketing claims made in this content. In the center column, show the format for the evidence that supports the claim, or indicate that the claim lacks support. In the right column, rate the extent to which the claim is supported by evidence using a bar chart.
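If you prefer to assemble the chart yourself from the AI's ratings, a plain-text version is easy to render. This sketch assumes a 0 to 5 support rating per claim (the prompt does not fix a scale) and draws each rating as a bar of `#` characters; the sample claims are hypothetical.

```python
def evidence_chart(rows: list[tuple[str, str, int]], max_score: int = 5) -> str:
    """Render (claim, evidence, rating) rows as a three-column text table.

    The rating is clamped to 0..max_score and drawn as '#' (supported)
    followed by '.' (unsupported) so every bar has the same width.
    """
    claim_w = max([len("Claim")] + [len(claim) for claim, _, _ in rows])
    ev_w = max([len("Evidence")] + [len(evidence) for _, evidence, _ in rows])
    lines = [f"{'Claim':<{claim_w}} | {'Evidence':<{ev_w}} | Support"]
    lines.append(f"{'-' * claim_w}-+-{'-' * ev_w}-+-{'-' * max_score}")
    for claim, evidence, score in rows:
        score = max(0, min(score, max_score))
        bar = "#" * score + "." * (max_score - score)
        lines.append(f"{claim:<{claim_w}} | {evidence:<{ev_w}} | {bar}")
    return "\n".join(lines)


if __name__ == "__main__":
    rows = [
        ("Trusted by 500 clinics", "Client logos", 4),
        ("Fastest setup in the industry", "None found", 0),
    ]
    print(evidence_chart(rows))
```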

Competitor Comparison Audit

AI can perform various persona-driven website analyses. Provide AI with screenshots of three competitors' pages and your own, including homepages, product pages, offer pages, pricing pages, case study and testimonial pages, about us pages, and others.

For each page type, have AI compare and contrast, list advantages and disadvantages, and assign 1 to 10 ratings. Then, request a summary table with all ratings. Lastly, ask for recommendations to improve your pages, making them stand out from competitors based on your ideal customer profile and target persona.
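Once the AI returns its 1 to 10 ratings, a short script can turn them into a summary and show where you trail the strongest competitor. The sites, page types, and scores below are hypothetical placeholders.

```python
from statistics import mean

# Hypothetical 1-10 ratings returned by the AI, keyed by page type, then site.
ratings = {
    "Homepage": {"You": 6, "Competitor A": 8, "Competitor B": 7, "Competitor C": 5},
    "Pricing page": {"You": 4, "Competitor A": 7, "Competitor B": 6, "Competitor C": 8},
    "Case studies": {"You": 9, "Competitor A": 5, "Competitor B": 6, "Competitor C": 7},
}


def site_averages(ratings):
    """Average rating per site across all page types."""
    sites = next(iter(ratings.values())).keys()
    return {site: mean(page[site] for page in ratings.values()) for site in sites}


def biggest_gaps(ratings, you="You"):
    """Page types sorted by how far you trail the best competitor (largest gap first)."""
    gaps = {}
    for page_type, scores in ratings.items():
        best_rival = max(score for site, score in scores.items() if site != you)
        gaps[page_type] = best_rival - scores[you]
    return sorted(gaps.items(), key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    for site, avg in site_averages(ratings).items():
        print(f"{site:<14} average {avg:.1f}")
    for page_type, gap in biggest_gaps(ratings):
        print(f"{page_type:<13} gap to best rival: {gap:+d}")
```

Sorting by the gap to the best rival puts the pricing page first in this example, which is where recommendations have the most room to help; a negative gap marks a page type where you already lead.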

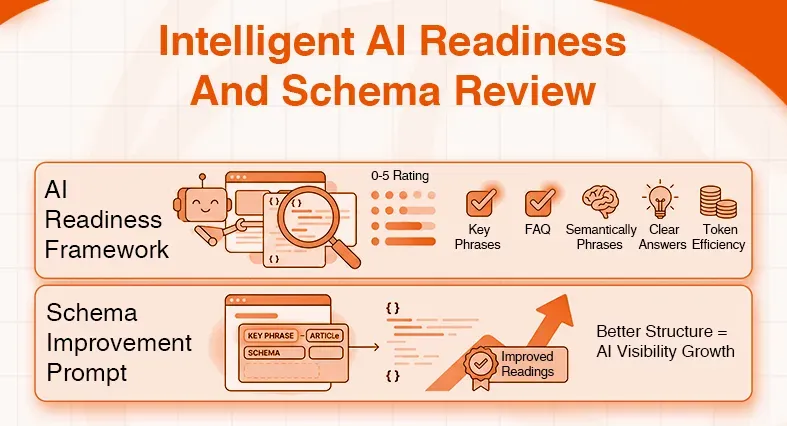

Intelligent AI Readiness and Schema Review

In the two methods above, we gave AI technical access and textual content. Both approaches, however, missed elements that matter for AI search readiness: structured data and LLM query alignment. This AI audit takes a different approach: provide AI with the schema markup or simulate LLM queries.

Pros: AI can review schema implementation and query alignment within structured data.

Cons: You need an AI tool that can parse schema, and query simulation may require a premium account.

AI Readiness Framework

You are an AI search specialist skilled at optimizing web pages for visibility in AI Overviews through structured data and semantic alignment. The following represent AI readiness best practices:

- Exact match or partial match of the target key phrases appears in the schema properties

- The target key phrase appears in FAQ or HowTo structured data

- The content includes semantically related phrases that align with LLM training data

- The page provides clear, concise answers suitable for zero-click features

- Token efficiency remains high with minimal code bloat per word of content

- The copy is in-depth and detailed without redundancy

Analyze the page and rate its alignment with these AI readiness best practices on a scale of 0 to 5.
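Because FAQ structured data comes up in this checklist, here is a minimal sketch of generating a schema.org `FAQPage` JSON-LD block from question and answer pairs. The `faq_jsonld` helper and the sample question are hypothetical; validate the output with a structured data testing tool before deploying it.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build a schema.org FAQPage JSON-LD <script> tag from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    body = json.dumps(data, indent=2, ensure_ascii=False)
    # Escape "</" so text such as "</script>" inside an answer cannot end the tag early.
    body = body.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


if __name__ == "__main__":
    print(faq_jsonld([
        ("What is an AI website audit?",
         "An automated review of a site's technical, content, and schema issues."),
    ]))
```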

Schema Improvement Prompt

Recommend five changes to the structured data of the provided webpage that better incorporate the semantically related key phrases. Make sure every recommendation improves the clarity and machine readability of the markup. Highlight the key phrases in the recommendations.

It is your responsibility as the strategist to review the response, find the useful ideas, and build on them.
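To review the AI's schema recommendations against the first checklist item (exact or partial key phrase matches in schema properties), a rough check can scan every string value in a parsed JSON-LD object. This is a sketch under one stated assumption: "exact" means the phrase appears as a case-insensitive substring, and "partial" means every word of the phrase appears somewhere in the schema values. The sample schema and phrases are hypothetical.

```python
import re


def _strings(node):
    """Yield every string value in a nested JSON-LD structure (keys are ignored)."""
    if isinstance(node, str):
        yield node
    elif isinstance(node, dict):
        for value in node.values():
            yield from _strings(value)
    elif isinstance(node, list):
        for item in node:
            yield from _strings(item)


def phrase_matches(schema, phrases: list[str]) -> dict[str, str]:
    """Label each key phrase as 'exact', 'partial', or 'missing' in the schema values."""
    text = " ".join(_strings(schema)).lower()
    words_in_schema = set(re.findall(r"[a-z0-9]+", text))
    result = {}
    for phrase in phrases:
        lowered = phrase.lower()
        if lowered in text:
            result[phrase] = "exact"
        elif set(re.findall(r"[a-z0-9]+", lowered)) <= words_in_schema:
            result[phrase] = "partial"
        else:
            result[phrase] = "missing"
    return result


if __name__ == "__main__":
    schema = {
        "@type": "Service",
        "name": "AI Website Audit",
        "description": "Technical SEO audit for dental websites",
    }
    print(phrase_matches(schema, ["ai website audit", "dental SEO", "link building"]))
```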

Comparison of All Three Audit Methodologies

| Approach | Primary Input | Key Advantages | Ideal Use Case |
| --- | --- | --- | --- |
| Deep Site Crawl | Technical infrastructure | Identifies robots.txt blocks and schema gaps | Technical audit |
| NLP Content Scan | Textual content | Flags trust gaps and token bloat | EEAT evaluation |
| LLM Query Simulation | Structured data | Prepares pages for AI Overviews | AI readiness review |

Leading AI Audit Platforms for 2026

| Platform | Distinctive Capability | Free Crawl Limit | Optimal Application |
| --- | --- | --- | --- |
| Nightwatch | Prompt-based fixes and competitor gaps | 5,000 pages | Comprehensive technical evaluation |
| SEMrush | NLP and EEAT toxicity scoring | 100 URLs | Enterprise content quality assessment |
| Ahrefs | AI link predictions and log analysis | 5,000 pages | Backlink strategy and technical focus |
| AI Search Audit | LLM query testing and overview optimization | Unlimited scan | AI search readiness preparation |

Audit Phase Evaluation Framework

| Phase | Key Metrics Examined | Typical Resolution Time | Anticipated Benefit |
| --- | --- | --- | --- |
| Technical Readiness | Robots.txt blocks, schema coverage | 30 minutes | 10% crawl budget improvement |
| Content Quality | EEAT signals, token efficiency | 1 hour | 15% engagement improvement |
| AI Search Alignment | LLM query match, answer clarity | 2 hours | 30% zero-click visibility gain |
| Performance Optimization | Core Web Vitals, code bloat | 3 hours | 2-second load time reduction |
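The Core Web Vitals row can be checked against Google's published "good" and "poor" boundaries (LCP 2.5 s and 4 s, INP 200 ms and 500 ms, CLS 0.1 and 0.25), which are assessed at the 75th percentile of page loads. This sketch assumes you have raw field measurements per metric and uses a simple nearest-rank percentile, which may differ slightly from how Google's own reports aggregate data.

```python
import math

# (good upper bound, needs-improvement upper bound) per metric.
THRESHOLDS = {
    "LCP": (2500, 4000),  # milliseconds
    "INP": (200, 500),    # milliseconds
    "CLS": (0.1, 0.25),   # unitless layout-shift score
}


def p75(values):
    """75th percentile using the nearest-rank method."""
    if not values:
        raise ValueError("need at least one measurement")
    ordered = sorted(values)
    rank = math.ceil(0.75 * len(ordered))
    return ordered[rank - 1]


def assess(samples: dict[str, list[float]]) -> dict[str, str]:
    """Classify each metric's 75th percentile as good, needs improvement, or poor."""
    verdicts = {}
    for metric, values in samples.items():
        good, needs_improvement = THRESHOLDS[metric]
        value = p75(values)
        if value <= good:
            verdicts[metric] = "good"
        elif value <= needs_improvement:
            verdicts[metric] = "needs improvement"
        else:
            verdicts[metric] = "poor"
    return verdicts


if __name__ == "__main__":
    # Hypothetical field samples for one page template.
    samples = {
        "LCP": [1800, 2100, 2600, 3900],
        "INP": [120, 150, 180, 190],
        "CLS": [0.05, 0.3, 0.4, 0.5],
    }
    print(assess(samples))
```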

Common Issues and AI-Driven Solutions

| Issue | Prevalence | Symptom and Fix | Traffic Improvement Potential |
| --- | --- | --- | --- |
| Accidental bot blocks | 30% of sites | AI cannot crawl content. Update robots.txt. | 12% visibility increase |
| Schema voids | 70% of sites | No rich results in AI Overviews. Add JSON-LD. | More citations and zero-click wins |
| JavaScript rendering traps | 40% of sites | Content appears blank to RAG agents. Implement server-side rendering. | Improved AI visibility and indexing |
| Code bloat | 150KB average | LLMs truncate content. Minify and optimize. | 18% dwell time improvement |
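The code bloat and JavaScript rendering rows can be approximated from the raw HTML a server returns. This sketch measures HTML size per 500 visible words against the 150KB budget from the technical framework and flags pages whose server-delivered markup contains almost no visible text, a common sign that content is rendered client-side. Treating 1KB as 1,024 bytes and using a 50-word floor are assumptions, not established thresholds, and `bloat_report` is a hypothetical helper.

```python
from html.parser import HTMLParser

NON_VISIBLE = ("script", "style", "noscript")


class _WordCounter(HTMLParser):
    """Count words of visible text, ignoring script, style, and noscript contents."""

    def __init__(self):
        super().__init__()
        self._skip = 0
        self.words = 0

    def handle_starttag(self, tag, attrs):
        if tag in NON_VISIBLE:
            self._skip += 1

    def handle_endtag(self, tag):
        if tag in NON_VISIBLE and self._skip:
            self._skip -= 1

    def handle_data(self, data):
        if not self._skip:
            self.words += len(data.split())


def bloat_report(html: str, budget_kb_per_500_words: float = 150.0, min_words: int = 50) -> dict:
    counter = _WordCounter()
    counter.feed(html)
    counter.close()
    size_kb = len(html.encode("utf-8")) / 1024  # assumption: 1KB = 1,024 bytes
    words = counter.words
    # With no visible words, any amount of markup counts as over budget.
    kb_per_500 = size_kb / (words / 500) if words else float("inf")
    return {
        "size_kb": round(size_kb, 1),
        "visible_words": words,
        "kb_per_500_words": round(kb_per_500, 1) if words else kb_per_500,
        "over_budget": kb_per_500 > budget_kb_per_500_words,
        "possible_js_rendering_trap": words < min_words,
    }


if __name__ == "__main__":
    app_shell = "<html><body><div id='root'></div><script>" + "x" * 200_000 + "</script></body></html>"
    print(bloat_report(app_shell))
```

Because the check reads only the initial HTML, a page that looks complete in a browser can still be flagged here, which is the same view a non-rendering crawler or RAG agent would get.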

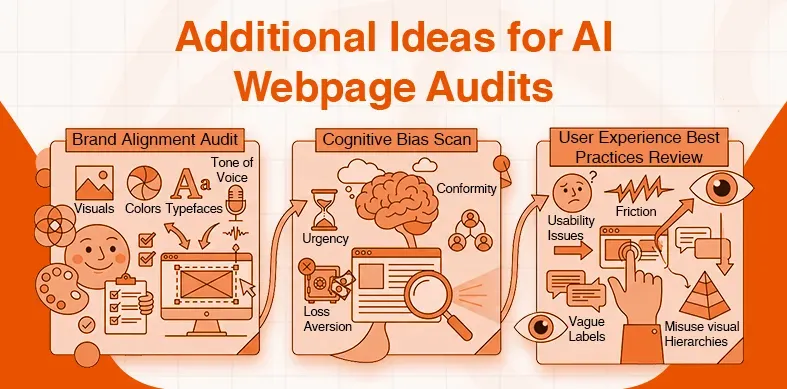

Additional Ideas for AI Webpage Audits

Now that you understand the three ways to provide AI with your webpages, here are more audit types you can conduct:

- Brand Alignment Audit: Write a framework that checks for alignment with your brand standards, from visuals, color, and typefaces, to tone of voice.

- Cognitive Bias Scan: Write a framework that scans the page to find missed opportunities to leverage cognitive biases such as urgency, loss aversion, and conformity.

- User Experience Best Practices Review: Write a framework that finds usability issues, friction, vague labels, and misuse of visual hierarchies.

Above all, the point is to use AI for gap analysis, finding opportunities, and getting quick recommendations. Once a framework is written and refined, these AI methods are fast and easy to run.

Important Things to Keep in Mind

- Do not trust AI implicitly.

- Stay critical. Stay strategic.

- Focus on the audience, the actions, and the impact.

- AI provides one more input; you synthesize it, put it in context, and make the decision.

- You know your audience, your brand, and your competitive landscape in ways no algorithm can replicate.

Start with one page. One framework. One insight. Then iterate. Then expand. Then measure. The goal is not perfection but progress. Not automation but augmentation. Not speed alone, but impact.